KAIST Develops Healthcare Device Tracking Chronic Diabetic Wounds

A KAIST research team has developed an effective wireless system that monitors the wound healing process by tracking the spatiotemporal temperature changes and heat transfer characteristics of damaged areas such as diabetic wounds.

On the 5th of March, KAIST (represented by President Kwang Hyung Lee) announced that the research team led by Professor Kyeongha Kwon from KAIST’s School of Electrical Engineering, in association with Chung-Ang University professor Hanjun Ryu, developed digital healthcare technology that tracks the wound healing process in real time, which allows appropriate treatments to be administered.

< Figure 1. Schematic illustrations and diagrams of real-time wound monitoring systems. >

The skin serves as a barrier protecting the body from harmful substances, so damage to the skin may pose severe health risks to patients in need of intensive care. Diabetic patients in particular develop chronic wounds easily because of complications in blood circulation and the wound healing process. In the United States alone, hundreds of billions of dollars in medical costs stem from regenerating the skin after such wounds. While various methods exist to promote wound healing, management must be personalized to the condition of each patient's wounds.

Accordingly, the research team tracked the heating response within the wound by utilizing the differences in temperature between the damaged area and the surrounding healthy skin. They then measured heat transfer characteristics to observe moisture changes near the skin surface, ultimately establishing a basis for understanding the formation process of scar tissue. The team conducted experiments on delayed wound healing under pathological conditions using diabetic mouse models, and demonstrated that the collected data accurately track the wound healing process and the formation of scar tissue.

To minimize the tissue damage that may occur in the process of removing the tracking device after healing, the system integrates biodegradable sensor modules capable of natural decomposition within the body. These biodegradable modules disintegrate within the body after use, thus reducing the risk of additional discomfort or tissue damage upon device removal. Furthermore, the device could one day be used for monitoring inside the wound area as there is no need for removal.

Professor Kyeongha Kwon, who led the research, anticipates that continuous monitoring of wound temperature and heat transfer characteristics will enable medical professionals to more accurately assess the status of diabetic patients' wounds and provide appropriate treatment. Professor Kwon further predicted that the use of biodegradable sensors will allow the device to decompose safely after wound healing without the need for removal, making live monitoring possible not only in hospitals but also at home.

The research team plans to integrate antimicrobial materials into this device, aiming to expand its technological capabilities to enable the observation and prevention of inflammatory responses, bacterial infections, and other complications. The goal is to provide a multi-purpose wound monitoring platform capable of real-time antimicrobial monitoring in hospitals or homes by detecting changes in temperature and heat transfer characteristics indicative of infection levels.

< Image 1. Image of the bioresorbable temperature sensor >

The results of this study were published on February 19th in the international journal Advanced Healthcare Materials and selected as the inside back cover article, titled "Materials and Device Designs for Wireless Monitoring of Temperature and Thermal Transport Properties of Wound Beds during Healing."

This research was conducted with support from the Basic Research Program, the Regional Innovation Center Program, and the BK21 Program.

2024.03.11 View 9569

KAIST to begin Joint Research to Develop Next-Generation LiDAR System with Hyundai Motor Group

< (From left) Jong-Soo Lee, Executive Vice President at Hyundai Motor, Sang-Yup Lee, Senior Vice President for Research at KAIST >

The ‘Hyundai Motor Group-KAIST On-Chip LiDAR Joint Research Lab’ was opened at KAIST’s main campus in Daejeon to develop LiDAR sensors for advanced autonomous vehicles.

The joint research lab aims to develop high-performance and compact on-chip sensors and new signal detection technology, which are essential in the increasingly competitive autonomous driving market. On-chip sensors, which utilize semiconductor manufacturing technology to add various functions, can reduce the size of LiDAR systems compared to conventional methods and secure price competitiveness through mass production using semiconductor fabrication processes.

The joint research lab will consist of about 30 researchers, including the Hyundai-Kia Institute of Advanced Technology Development research team and KAIST professors Sanghyeon Kim, Sangsik Kim, Wanyeong Jung, and Hamza Kurt from KAIST’s School of Electrical Engineering, and will operate for four years until 2028.

KAIST will lead the specialized work of each research team, such as the development of silicon optoelectronic on-chip LiDAR components, the fabrication of high-speed, high-power integrated circuits to run the LiDAR systems, and the optimization and verification of LiDAR systems.

Hyundai Motor and Kia, together with Hyundai NGV, a specialized industry-academia cooperation institution, will oversee the operation of the joint research lab and provide support such as monitoring technological trends, suggesting research directions, deriving core ideas, and recommending technologies and experts to enhance research capabilities.

A Hyundai Motor Group official said, "We believe that this cooperation between Hyundai Motor Company and Kia, the leaders in autonomous driving technology, and KAIST, the home of world-class technology, will hasten the achievement of fully autonomous driving." He added, "We will do our best to enable the lab to produce tangible results."

Professor Sanghyeon Kim said, "The LiDAR sensor, which serves as the eyes of a car, is a core technology for future autonomous vehicle development that is essential for automobile companies to internalize."

2024.02.27 View 13230

Genome Sequencing Unveils Mutational Impacts of Radiation on Mammalian Cells

The recent release of wastewater from the site of Japan's Fukushima nuclear disaster has stirred apprehension regarding the health implications of radiation exposure. Classified as a Group 1 carcinogen, ionizing radiation has long been associated with various cancers and genetic disorders, as evidenced by survivors and descendants of atomic bombings and the Chernobyl disaster. We are also continually exposed to radiation, albeit at much lower levels, in everyday life and medical procedures.

Radiation, whether in the form of high-energy particles or electromagnetic waves, is conventionally known to break our cellular DNA, leading to cancer and genetic disorders. Yet, our understanding of the quantitative and qualitative mutational impacts of ionizing radiation has been incomplete.

On the 14th, Professor Young Seok Ju and his research team from KAIST, in collaboration with Dr. Tae Gen Son from the Dongnam Institute of Radiological and Medical Science, and Professors Kyung Su Kim and Ji Hyun Chang from Seoul National University, unveiled a breakthrough. Their study, led by joint first authors Drs. Jeonghwan Youk, Hyun Woo Kwon, Joonoh Lim, Eunji Kim and Tae-Woo Kim, titled "Quantitative and qualitative mutational impact of ionizing radiation on normal cells," was published in Cell Genomics.

Employing meticulous techniques, the research team comprehensively analyzed the whole-genome sequences of cells pre- and post-radiation exposure, pinpointing radiation-induced DNA mutations. Experiments involving cells from different organs of humans and mice exposed to varying radiation doses revealed mutation patterns correlating with exposure levels. (Figure 1)

Notably, exposure to 1 Gray (Gy) of radiation resulted in an average of 14 mutations per exposed cell. (Figure 2) Unlike those caused by other carcinogens, radiation-induced mutations primarily comprised short base deletions and a set of structural variations including inversions, translocations, and various complex genomic rearrangements. (Figure 3) Interestingly, experiments subjecting cells to a low radiation dose rate over 100 days demonstrated that, for an equivalent total radiation dose, the number of mutations mirrored that of high-dose-rate exposure.

"Through this study, we have clearly elucidated the effects of radiation on cells at the molecular level," said Prof. Ju at KAIST. "Now we understand better how radiation changes the DNA of our cells," he added.

Dr. Son from the Dongnam Institute of Radiological and Medical Science stated, "Based on this study, we will continue to research the effects of very low and very high doses of radiation on the human body," and further remarked, "We will advance the development of safe and effective radiation therapy techniques."

Professors Kim and Chang from Seoul National University College of Medicine expressed their views, saying, "Through this study, we believe we now have a tool to accurately understand the impact of radiation on human DNA," and added, "We hope that many subsequent studies will emerge using the research methodologies employed in this study."

This research represents a significant leap forward in radiation studies, made possible through collaborative efforts and interdisciplinary approaches. It engaged scholars from diverse backgrounds, including the Genetic Engineering Research Institute at Seoul National University, the Cambridge Stem Cell Institute in the UK, the Institute for Molecular Biotechnology in Austria (IMBA), and Genome Insight Inc. (a KAIST spin-off start-up). This study was supported by various institutions including the National Research Foundation of Korea, the Dongnam Institute of Radiological and Medical Science (supported by the Ministry of Science and ICT of the government of South Korea), the Suh Kyungbae Foundation, the Human Frontier Science Program (HFSP), the Korea University Anam Hospital, the Korea Foundation for the Advancement of Science and Creativity, the Ministry of Science and ICT, and the National R&D Program.

2024.02.15 View 10284

Team KAIST placed among top two at MBZIRC Maritime Grand Challenge

Representing Korean Robotics at Sea: KAIST’s 26-month effort rewarded

- Team KAIST, composed of students from the labs of Professor Jinwhan Kim of the Department of Mechanical Engineering and Professor Hyunchul Shim of the School of Electrical Engineering, finished the challenge as first runner-up, winning prize money totaling $650,000 (KRW 860 million).

- Successfully led the autonomous collaboration of unmanned aerial and maritime vehicles using cutting-edge robotics and AI technology through to the final round of the competition held in Abu Dhabi from January 10 to February 6, 2024.

KAIST (President Kwang-Hyung Lee) reported on the 8th that Team KAIST, led by students from the labs of Professor Jinwhan Kim of the Department of Mechanical Engineering and Professor Hyunchul Shim of the School of Electrical Engineering, with Pablo Aviation as a partner, won a total of $650,000 (KRW 860 million) in prize money at the Maritime Grand Challenge by the Mohamed Bin Zayed International Robotics Challenge (MBZIRC), finishing first runner-up.

This competition, the largest robotics competition ever held over water, is sponsored by the government of the United Arab Emirates and organized by ASPIRE, an organization under the Abu Dhabi Ministry of Science, with total prize money of $3 million.

The competition started at the end of 2021 with 52 teams from around the world, and five teams were selected in February 2023 to go on to the finals after the first and second stages of screening. The final round was held from January 10 to February 6, 2024, using actual unmanned ships and drones in a secluded sea area of 10 km² off the coast of Abu Dhabi, the capital of the United Arab Emirates. A total of 18 KAIST students, together with Professor Jinwhan Kim and Professor Hyunchul Shim, took part in the competition on site in Abu Dhabi.

Team KAIST will receive $500,000 in prize money for taking second place in the final, bringing the team’s total prize money to $650,000, including $150,000 that was given as a special midterm award for finalists.

The final mission scenario is to find a fleeing target vessel carrying illegal cargo among many ships moving in a GPS-denied sea area, inspect its deck for two different types of stolen cargo, and recover them: the aerial vehicle retrieves the smaller cargo, and a robot manipulator mounted on an unmanned ship retrieves the larger one. The true aim is to complete the entire mission through autonomous collaboration between the unmanned ship and the aerial vehicle, without human intervention. In particular, since the competition rules prohibit the use of GPS, Professor Jinwhan Kim's research team developed autonomous operation techniques for unmanned ships, including search and navigation methods using maritime radar, and Professor Hyunchul Shim's research team developed video-based navigation and a technology for combining a small autonomous robot with a drone.

The final mission is to retrieve cargo on board a ship fleeing at sea through autonomous collaboration between unmanned ships and unmanned aerial vehicles without human intervention. The overall mission consists of a first stage, an inspection to find the target ship among several ships moving at sea, and a second stage, an intervention mission to retrieve the cargo from the deck of the ship. Each team was given a total of three attempts, and the team that completed the highest-level mission in the shortest time across the three attempts received the highest score.

In the first attempt, KAIST was the only team to succeed in the first-stage search mission, but the competition began in earnest when the Croatian team also completed the first stage in the second attempt. Because the competition schedule was delayed by strong winds and high waves that continued for several days, the organizers decided to hold the finals with three teams: Team KAIST and the team from Croatia’s University of Zagreb, which had completed the first stage of the mission, and Team Fly-Eagle, a team of researchers from China and the UAE that had partially completed the first stage. The three teams were given the chance to proceed to the finals for a third attempt. In the final competition, the Croatian team won, KAIST took second place, and the combined UAE-China team took third place. The final prize is set at $2 million for the winning team, $500,000 for the runner-up, and $250,000 for third place.

Professor Jinwhan Kim of the Department of Mechanical Engineering, who served as the advisor for Team KAIST, said, “I would like to express my gratitude and congratulations to the students who put huge academic and physical effort into preparing for the competition over the past two years. I feel rewarded because, regardless of the results, every bit of effort put in up to this point will become the basis of their confidence and a valuable asset in their growth into great researchers.” Sol Han, a doctoral student in mechanical engineering who served as the team leader, said, “I am disappointed at how narrowly we missed out on winning in the end, but I am satisfied with the significance of what we achieved, and I am grateful to the team members who worked hard together for it.”

HD Hyundai, Rainbow Robotics, Avikus, and FIMS also participated as sponsors for Team KAIST's campaign.

2024.02.09 View 15601

KAIST Research Team Breaks Down Musical Instincts with AI

Music, often referred to as the universal language, is known to be a common component in all cultures. Then, could ‘musical instinct’ be something that is shared to some degree despite the extensive environmental differences amongst cultures?

On January 16, a KAIST research team led by Professor Hawoong Jung from the Department of Physics announced that it had used an artificial neural network model to identify the principle by which musical instincts emerge from the human brain without special learning.

Previously, many researchers have attempted to identify the similarities and differences between the music of different cultures and to understand the origin of its universality. A paper published in Science in 2019 revealed that music is produced in all ethnographically distinct cultures, and that similar forms of beats and tunes are used. Neuroscientists have also found that a specific part of the human brain, namely the auditory cortex, is responsible for processing musical information.

Professor Jung’s team used an artificial neural network model to show that cognitive functions for music form spontaneously as a result of processing auditory information received from nature, without being taught music. The research team utilized AudioSet, a large-scale collection of sound data provided by Google, and trained the artificial neural network to recognize the various sounds. Interestingly, the research team discovered that certain neurons within the network model would respond selectively to music. In other words, they observed the spontaneous generation of neurons that reacted minimally to various other sounds like those of animals, nature, or machines, but showed high levels of response to various forms of music, both instrumental and vocal.

The neurons in the artificial neural network model behaved similarly to those in the auditory cortex of a real brain. For example, the artificial neurons responded less to music that had been cut into short intervals and rearranged. This indicates that the spontaneously generated music-selective neurons encode the temporal structure of music. This property was not limited to a specific genre of music, but emerged across 25 different genres including classical, pop, rock, jazz, and electronic.

< Figure 1. Illustration of the musicality of the brain and artificial neural network (created with DALL·E3 AI based on the paper content) >

Furthermore, suppressing the activity of the music-selective neurons was found to greatly impede the cognitive accuracy for other natural sounds. That is to say, the neural function that processes musical information also helps process other sounds, and ‘musical ability’ may be an instinct formed as a result of an evolutionary adaptation acquired to better process sounds from nature.

Professor Hawoong Jung, who advised the research, said, “The results of our study imply that evolutionary pressure has contributed to forming the universal basis for processing musical information in various cultures.” As for the significance of the research, he explained, “We look forward to this artificially built model with human-like musicality becoming an original model for various applications including AI music generation, musical therapy, and research in musical cognition.” He also commented on its limitations, adding, “This research, however, does not take into consideration the developmental process that follows the learning of music, and it must be noted that this is a study on the foundation of processing musical information in early development.”

< Figure 2. The artificial neural network that learned to recognize non-musical natural sounds in the cyber space distinguishes between music and non-music. >

This research, conducted by first author Dr. Gwangsu Kim of the KAIST Department of Physics (current affiliation: MIT Department of Brain and Cognitive Sciences) and Dr. Dong-Kyum Kim (current affiliation: IBS), was published in Nature Communications under the title “Spontaneous emergence of rudimentary music detectors in deep neural networks”.

This research was supported by the National Research Foundation of Korea.

2024.01.23 View 9185

KAIST Research team develops anti-icing film that only requires sunlight

A KAIST research team has developed an anti-icing and de-icing film coating technology that brings the photothermal effect of gold nanoparticles to industrial sites without the need for heating wires, periodic spraying or oil coating with anti-freeze substances, or substrate design alterations.

The group led by Professor Hyoungsoo Kim from the Department of Mechanical Engineering (Fluid & Interface Laboratory) and Professor Dong Ki Yoon from the Department of Chemistry (Soft Material Assembly Group) announced on January 3 that they had jointly developed an original technique that can uniformly pattern gold nanorod (GNR) particles in quadrants through simple evaporation, and had used it to develop an anti-icing and de-icing surface.

Many scientists in recent years have tried to control substrate surfaces through various coating techniques, and those involving the patterning of functional nanomaterials have gained special attention. In particular, GNR is considered a promising candidate nanomaterial for its biocompatibility, chemical stability, relatively simple synthesis, and its stable and unique property of surface plasmon resonance. To maximize the performance of GNR, it is important to achieve a high uniformity during film deposition, and a high level of rod alignment. However, achieving both criteria has thus far been a difficult challenge.

< Figure 1. Conceptual image of the hydrodynamic mechanisms for the formation of a homogeneous quadrant cellulose nanocrystal (CNC) matrix. >

To solve this, the joint research team utilized cellulose nanocrystal (CNC), a next-generation functional nanomaterial that can easily be extracted from nature. By co-assembling GNR on CNC quadrant templates, the team could dry the film uniformly and successfully obtain a GNR film with uniform alignment in a ring shape. Compared to existing coffee-ring films, the highly uniform and aligned GNR film developed through this research showed enhanced plasmonic photothermal properties, and the team showed that it could carry out anti-icing and de-icing functions simply by being irradiated with light in the visible wavelength range.

< Figure 2. Optical and thermal performance evaluation results of gold nanorod film and demonstration of plasmonic heater for anti-icing and de-icing. >

Professor Hyoungsoo Kim said, “This technique can be applied to plastic, as well as flexible surfaces. By using it on exterior materials and films, it can generate its own heat energy, which would greatly save energy through voluntary thermal energy harvesting across various applications including cars, aircraft, and windows in residential or commercial spaces, where frosting becomes a serious issue in the winter.” Professor Dong Ki Yoon added, “This research is significant in that we can now freely pattern the CNC-GNR composite, which was previously difficult to create into films, over a large area. We can utilize this as an anti-icing material, and if we were to take advantage of the plasmonic properties of gold, we could also use it like stained glass to decorate glass surfaces.”

This research was conducted by Ph.D. candidate Jeongsu Pyeon from the Department of Mechanical Engineering and his co-first author Dr. Soon Mo Park (a KAIST graduate, currently a post-doctoral associate at Cornell University), and was published online in Nature Communications on December 8, 2023 under the title “Plasmonic Metasurfaces of Cellulose Nanocrystal Matrices with Quadrants of Aligned Gold Nanorods for Photothermal Anti-Icing.” Recognized for its achievement, the research was also selected as an editor’s highlight for the journals Materials Science and Chemistry, and Inorganic and Physical Chemistry.

This research was supported by the Individual Basic Mid-Sized Research Fund from the National Research Foundation of Korea and the Center for Multiscale Chiral Architectures.

2024.01.16 View 12879

KAIST Demonstrates AI and sustainable technologies at CES 2024

On January 2, KAIST announced it will be participating in the Consumer Electronics Show (CES) 2024, held between January 9 and 12.

CES, held in Las Vegas, is one of the world’s largest tech conferences. Under the slogan “KAIST, the Global Value Creator” for its exhibition, KAIST has submitted technologies falling under one of the following two themes: “Expansion of Human Intelligence, Mobility, and Reality”, and “Pursuit of Human Security and Sustainable Development”.

24 startups and pre-startups whose technologies stand out in various fields, including artificial intelligence (AI), mobility, virtual reality, healthcare and human security, and sustainable development, will welcome visitors at an exclusive booth of 232 m² prepared for KAIST at Eureka Park in Las Vegas.

12 businesses will participate in the first category, “Expansion of Human Intelligence, Mobility, and Reality”, including MicroPix, Panmnesia, DeepAuto, MGL, Reports, Narnia Labs, EL FACTORY, Korea Position Technology, AudAi, Planby Technologies, Movin, and Studio Lab.

In the “Pursuit of Human Security and Sustainable Development” category, 12 businesses including Aldaver, ADNC, Solve, Iris, Blue Device, Barreleye, TR, A2US, Greeners, Iron Boys, Shard Partners and Kingbot, will be introduced.

In particular, Aldaver is a startup that received the Korean Business Award 2023 as well as the presidential award at the Challenge K-Startup with its biomimetic material and printing technology. It has attracted 4.5 billion KRW of investment thus far.

Narnia Labs, with its AI design solution for manufacturing, won the grand prize for K-tech Startups 2022, and has so far attracted 3.5 billion KRW of investment.

Panmnesia is a startup that won the 2024 CES Innovation Award, recognized for its fabless AI semiconductor technology. It attracted 16 billion KRW of investment through its seed round alone.

Meanwhile, student startups will also be presented during the exhibition. Studio Lab received a CES 2024 Best of Innovation Award in the AI category. The team developed the software Seller Canvas, which automatically generates a page for product details when a user uploads an image of a product.

The central stage at the KAIST exhibition booth will be used to interview members of the participating startups between January 9 and 11, and will also serve as a networking site for businesses and invited investors during KAIST NIGHT on the evening of the 10th, between 5 and 7 PM.

Director Sung-Yool Choi of the KAIST Institute of Technology Value Creation said, “Through CES 2024, KAIST will overcome the limits of human intelligence, mobility, and space with the deep-tech based technologies developed by its startups, and will demonstrate its achievements for realizing its vision as a global value-creating university through the solutions for human security and sustainable development.”

2024.01.05 View 13068

A KAIST Research Team Develops High-Performance Stretchable Solar Cells

With the market for wearable electric devices growing rapidly, stretchable solar cells that can function under strain have received considerable attention as an energy source. To build such solar cells, their photoactive layer, which converts light into electricity, must show high electrical performance while possessing mechanical elasticity. However, satisfying both requirements is challenging, making stretchable solar cells difficult to develop.

On December 26, a KAIST research team from the Department of Chemical and Biomolecular Engineering (CBE) led by Professor Bumjoon Kim announced the development of a new conductive polymer material that achieved both high electrical performance and elasticity while introducing the world’s highest-performing stretchable organic solar cell.

Organic solar cells are devices whose photoactive layer, which is responsible for the conversion of light into electricity, is composed of organic materials. Compared to existing non-organic material-based solar cells, they are lighter and more flexible, making them highly applicable for wearable electrical devices. Solar cells as an energy source are particularly important for building electrical devices, but high-efficiency solar cells often lack flexibility, and their application in wearable devices has therefore been limited to this point.

The team led by Professor Kim conjugated a highly stretchable polymer to an electrically conductive polymer with excellent electrical properties through chemical bonding, and developed a new conductive polymer with both electrical conductivity and mechanical stretchability. This polymer meets the highest reported level of photovoltaic conversion efficiency (19%) using organic solar cells, while also showing 10 times the stretchability of existing devices. The team thereby built the world’s highest performing stretchable solar cell that can be stretched up to 40% during operation, and demonstrated its applicability for wearable devices.

< Figure 1. Chemical structure of the newly developed conductive polymer and performance of stretchable organic solar cells using the material. >

Professor Kim said, “Through this research, we not only developed the world’s best performing stretchable organic solar cell, but it is also significant that we developed a new polymer that can be applied as a base material for various electronic devices that need to be malleable and/or elastic.”

< Figure 2. Photovoltaic efficiency and mechanical stretchability of newly developed polymers compared to existing polymers. >

This research, conducted by KAIST researchers Jin-Woo Lee and Heung-Goo Lee as first co-authors in cooperation with teams led by Professor Taek-Soo Kim from the Department of Mechanical Engineering and Professor Sheng Li from the Department of CBE, was published in Joule on December 1 (Paper Title: Rigid and Soft Block-Copolymerized Conjugated Polymers Enable High-Performance Intrinsically-Stretchable Organic Solar Cells).

This research was supported by the National Research Foundation of Korea.

2024.01.04 View 10597

Center for Global Strategies and Planning Hosts Successful Virtual KAIST U.S. Alumni Connection Event

< Screen capture of the KAIST U.S. Alumni meeting held online on December 8 >

On December 8th, the Center for Global Strategies and Planning at KAIST, led by Vice President Man-Sung Yim of the International Office, conducted a virtual event to bring together KAIST alumni in the United States. The purpose of this event was to showcase KAIST's current initiatives in the U.S., facilitate information exchanges among U.S. alumni, and foster networking opportunities. Over 130 KAIST alumni based in the U.S. registered and attended the event.

The event began with a warm welcome from President Kwang-Hyung Lee, followed by a presentation from Vice President Man-Sung Yim on the current status and vision of KAIST's U.S. collaboration project as well as that of KAIST U.S. Foundation, Inc. Additionally, a distinguished KAIST alumnus, Seok-Hyun Yun, a professor from Harvard Medical School, delivered a keynote speech that highlighted the development of collaborative projects between KAIST and the United States. Alumni Hyun Gook Yoon, a manager at Ford Motor Company, and Eunkwang Joo, CEO of Wasder, also presented recent technological trends in the fields of batteries and blockchain, respectively.

President Kwang-Hyung Lee said, "This event serves as a crucial opportunity to enhance exchanges between KAIST and the U.S., playing a pivotal role in expanding KAIST's global presence." The event also featured small group discussions and networking sessions focusing on revitalizing collaborative efforts between KAIST and the United States.

After the small group discussions, a KAIST alumna and the current president of the Boston KAIST Alumni Association, Jiyoung Lee, shared her belief that the event will provide a meaningful opportunity for KAIST alumni from across the U.S. to come together and build a strong alumni community. Vice President Man-Sung Yim said, "Because collaboration with KAIST alumni in the U.S. is essential for the development of KAIST and innovative science and technology at the global level, we are committed to sustainably organizing meaningful events."

This virtual event for KAIST U.S. alumni has set a new milestone for global networking, marking the beginning of future collaborations and development.

2023.12.08 View 7996

North Korea and Beyond: AI-Powered Satellite Analysis Reveals the Unseen Economic Landscape of Underdeveloped Nations

- A joint research team in computer science, economics, and geography has developed an artificial intelligence (AI) technology to measure grid-level economic development within six-square-kilometer regions.

- This AI technology is applicable in regions with limited statistical data (e.g., North Korea), supporting international efforts to propose policies for economic growth and poverty reduction in underdeveloped countries.

- The research team plans to make this technology freely available for use to contribute to the United Nations' Sustainable Development Goals (SDGs).

The United Nations reports that more than 700 million people are in extreme poverty, earning less than two dollars a day. However, an accurate assessment of poverty remains a global challenge. For example, 53 countries have not conducted agricultural surveys in the past 15 years, and 17 countries have not published a population census. To fill this data gap, new technologies are being explored to estimate poverty using alternative sources such as street views, aerial photos, and satellite images.

The paper published in Nature Communications demonstrates how artificial intelligence (AI) can help analyze economic conditions from daytime satellite imagery. This new technology can even apply to the least developed countries - such as North Korea - that do not have reliable statistical data for typical machine learning training.

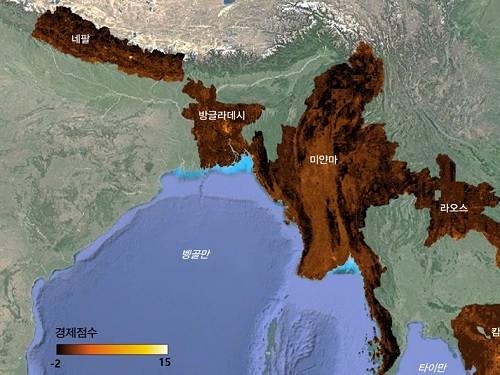

The researchers used publicly available Sentinel-2 satellite images from the European Space Agency (ESA). They split these images into small six-square-kilometer grids. At this zoom level, visual information such as buildings, roads, and greenery can be used to quantify economic indicators. As a result, the team obtained the first-ever fine-grained economic map of regions like North Korea. The same algorithm was applied to North Korea and five other underdeveloped countries in Asia: Nepal, Laos, Myanmar, Bangladesh, and Cambodia (see Image 1).
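As a rough illustration of the tiling step, the sketch below works out how many pixels such a grid cell would span and cuts a raster into tiles. It assumes that "six-square-kilometer" means a square cell with an area of 6 km² and that the imagery has 10 m pixels (the resolution of Sentinel-2's visible bands). The function names are hypothetical and are not taken from the team's code.

```python
import math


def tile_side_pixels(area_km2=6.0, metres_per_pixel=10.0):
    """Side length, in pixels, of a square tile covering area_km2 (assumed values)."""
    side_m = math.sqrt(area_km2) * 1000.0
    return round(side_m / metres_per_pixel)


def split_into_tiles(image, tile):
    """Yield (row, col, pixels) for every full tile in a 2-D list of pixel values.

    Partial tiles along the right and bottom edges are dropped.
    """
    rows, cols = len(image), len(image[0])
    for r in range(0, rows - tile + 1, tile):
        for c in range(0, cols - tile + 1, tile):
            yield r, c, [row[c:c + tile] for row in image[r:r + tile]]


if __name__ == "__main__":
    print("tile side in pixels:", tile_side_pixels())
    toy_image = [[r * 10 + c for c in range(6)] for r in range(4)]
    for r, c, pixels in split_into_tiles(toy_image, 2):
        print((r, c), pixels)
```

Under these assumptions each grid cell is roughly 2.45 km on a side.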

The key feature of their research model is the "human-machine collaborative approach," which lets researchers combine human input with AI predictions for areas with scarce data. In this research, ten human experts compared satellite images and judged the economic conditions in each area. The AI then learned from these human judgments and assigned an economic score to each image. The results showed that the human-AI collaborative approach outperformed machine-only learning algorithms.
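One common way to turn pairwise human judgments into per-image scores is the Bradley-Terry model. The article does not say which method the team used, so the following is only a minimal sketch under that assumption. The function name and the toy comparison data are hypothetical.

```python
from collections import defaultdict


def bradley_terry(comparisons, n_items, iters=200):
    """Estimate Bradley-Terry strengths from (winner, loser) index pairs.

    Uses simultaneous minorization-maximization updates and rescales the
    strengths so that they sum to n_items after every iteration.
    Each comparison must involve two different items.
    """
    wins = [0.0] * n_items
    pair_counts = defaultdict(int)  # comparisons per unordered pair
    for winner, loser in comparisons:
        wins[winner] += 1
        pair_counts[frozenset((winner, loser))] += 1

    strengths = [1.0] * n_items
    for _ in range(iters):
        updated = []
        for i in range(n_items):
            denom = 0.0
            for pair, count in pair_counts.items():
                if i in pair:
                    (j,) = pair - {i}
                    denom += count / (strengths[i] + strengths[j])
            updated.append(wins[i] / denom if denom > 0 else strengths[i])
        total = sum(updated)
        strengths = [s * n_items / total for s in updated]
    return strengths


if __name__ == "__main__":
    # Toy data: image 3 is usually judged more developed than 2, 2 than 1, 1 than 0.
    toy = [(3, 2), (3, 2), (2, 3),
           (2, 1), (2, 1), (1, 2),
           (1, 0), (1, 0), (0, 1)]
    scores = bradley_terry(toy, n_items=4)
    ranking = sorted(range(4), key=lambda i: scores[i])
    print("least to most developed:", ranking)
```

Scores of this kind could then serve as training targets for an image model. That step is not shown here.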

< Image 1. Nightlight satellite images of North Korea (Top-left: Background photo provided by NASA's Earth Observatory). South Korea appears brightly lit compared to North Korea, which is mostly dark except for Pyongyang. In contrast, the model developed by the research team uses daytime satellite imagery to produce more detailed economic predictions for North Korea (top-right) and five Asian countries (Bottom: Background photo from Google Earth). >

The research was led by an interdisciplinary team of computer scientists, economists, and a geographer from KAIST & IBS (Donghyun Ahn, Meeyoung Cha, Jihee Kim), Sogang University (Hyunjoo Yang), HKUST (Sangyoon Park), and NUS (Jeasurk Yang). Dr Charles Axelsson, Associate Editor at Nature Communications, handled this paper during the peer review process at the journal.

The research team found that the scores showed a strong correlation with traditional socio-economic metrics such as population density, employment, and number of businesses. This demonstrates the wide applicability and scalability of the approach, particularly in data-scarce countries. Furthermore, the model's strength lies in its ability to detect annual changes in economic conditions at a more detailed geospatial level without using any survey data (see Image 2).
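A rank correlation is one simple way to check this kind of agreement. The article does not name the statistic the team reported, so the sketch below uses Spearman's rank correlation as an assumed stand-in, with made-up scores and population densities.

```python
def average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        shared = (pos + end) / 2 + 1
        for k in range(pos, end + 1):
            ranks[order[k]] = shared
        pos = end + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length, non-constant sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


if __name__ == "__main__":
    model_scores = [0.10, 0.40, 0.35, 0.80]    # hypothetical per-grid scores
    population_density = [10, 50, 40, 300]     # hypothetical people per km^2
    print("Spearman rho:", spearman(model_scores, population_density))
```

Because it compares rankings rather than raw values, this measure does not require the scores and the reference metric to share a scale.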

< Image 2. Differences in satellite imagery and economic scores in North Korea between 2016 and 2019. Significant development was found in the Wonsan Kalma area (top), one of the tourist development zones, but no changes were observed in the Wiwon Industrial Development Zone (bottom). (Background photo: Sentinel-2 satellite imagery provided by the European Space Agency (ESA)). >

This model would be especially valuable for rapidly monitoring the progress of Sustainable Development Goals such as reducing poverty and promoting more equitable and sustainable growth on an international scale. The model can also be adapted to measure various social and environmental indicators. For example, it can be trained to identify regions with high vulnerability to climate change and disasters to provide timely guidance on disaster relief efforts.

As an example, the researchers explored how North Korea changed before and after the United Nations sanctions against the country. By applying the model to satellite images of North Korea from 2016 and 2019, the researchers identified three key trends in the country's economic development over that period. First, economic growth in North Korea became more concentrated in Pyongyang and major cities, exacerbating the urban-rural divide. Second, satellite imagery revealed significant changes in areas designated for tourism and economic development, such as new building construction and other meaningful alterations. Third, traditional industrial and export development zones showed relatively minor changes.

Meeyoung Cha, a data scientist on the team, explained, "This is an important interdisciplinary effort to address global challenges like poverty. We plan to apply our AI algorithm to other international issues, such as monitoring carbon emissions, disaster damage detection, and the impact of climate change."

An economist on the research team, Jihee Kim, commented that this approach would enable detailed examinations of economic conditions in the developing world at a low cost, reducing data disparities between developed and developing nations. She further emphasized that this is essential because many public policies require economic measurements to achieve their goals, whether they target growth, equality, or sustainability.

The research team has made the source code publicly available via GitHub and plans to continue improving the technology, applying it to new satellite images updated annually. The results of this study, with Ph.D. candidate Donghyun Ahn at KAIST and Ph.D. candidate Jeasurk Yang at NUS as joint first authors, were published in Nature Communications under the title "A human-machine collaborative approach measures economic development using satellite imagery."

< Photos of the main authors. 1. Donghyun Ahn, PhD candidate at KAIST School of Computing 2. Jeasurk Yang, PhD candidate at the Department of Geography of National University of Singapore 3. Meeyoung Cha, Professor of KAIST School of Computing and CI at IBS 4. Jihee Kim, Professor of KAIST School of Business and Technology Management 5. Sangyoon Park, Professor of the Division of Social Science at Hong Kong University of Science and Technology 6. Hyunjoo Yang, Professor of the Department of Economics at Sogang University >

2023.12.07 View 10602
